Embedded BLE112 - A UART and Watermark Example

My first post (here) dealing with the BLE112 module details my problems with getting I2C up and running. This post talks about some of the problems I’m having getting the UART functionality going… However, like the earlier post, I’ll show some of the steps to get a working example and keep updating this page as I learn more.

BLE112 BGScript code

I’ll keep this one shorter than the last and just show my code, along with the problematic statements and their outputs.

Hardware.xml

    <?xml version="1.0" encoding="UTF-8" ?>
    <hardware>
        <sleeposc enable="true" ppm="30" />
        <usart channel="1" alternate="1" baud="115200" endpoint="none" mode="uart" flow="false" />
        <sleep enable="false" />
        <usb enable="false" endpoint="api" />
        <txpower power="15" bias="5" />
        <script enable="true" />
    </hardware>

The line of code that absolutely clobbered me was <sleep enable="false" />.

Without that line of code, I was only getting the rx_watermark event thrown every few seconds (instead of every 100ms), and the data was very error prone. I’d say the data was wrong about 30% of the time (absolutely unacceptable).

From what I understand from the documentation, usart flow must be false if you intend to use 2-wire RX/TX style UART. If flow="true", then I believe you need to use the RTS/CTS lines as well.

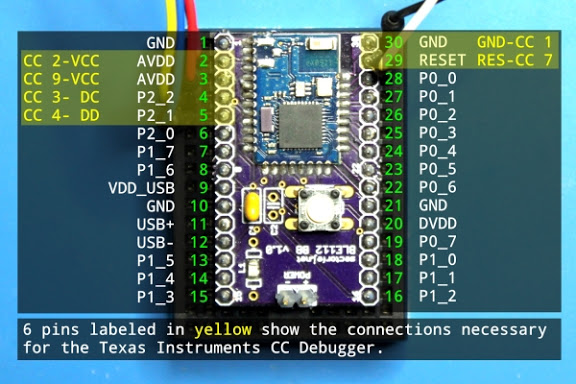

Oh, and just because I’ve never seen it in an example online yet, I’ll also mention that <usart channel="1" alternate="1" …> corresponds to P0_4 (TX) and P0_5 (RX) (according to the Version 1.26 - July 2012 BLE112 datasheet). If you’re using the same breakout board as me, then this diagram is incredibly useful (taken from Jeff Rowberg’s page). P0_4 and P0_5 are pins 23 and 24 on this board.

BGScript - Setting up watermarks

To use the UART, you first need to set up the endpoint’s watermarks. Since I’m using UART1, my endpoint is system_endpoint_uart1, and to set it up, I simply make this call in the system_boot event:

    call system_endpoint_set_watermarks(system_endpoint_uart1, 10, 0)

This call enables the rx_watermark event, which fires once 10 bytes of data have been received, and disables the tx_watermark (as I don’t need it). If I didn’t know the state of the TX watermark and didn’t want to mess with it, I would pass $ff instead of 0.
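To make the placement concrete, here is a minimal sketch of that call inside the boot event. The system_boot parameter list is an assumption taken from the BGScript examples shipped with the SDK of that era and may differ in other SDK versions, so check it against your API reference.

```bgscript
# Boot event: runs once when the module powers up or resets.
# (Parameter list assumed from the SDK examples; verify for your SDK version.)
event system_boot(major, minor, patch, build, ll_version, protocol_version, hw)
    # RX watermark = 10 bytes, TX watermark = 0 (disabled)
    call system_endpoint_set_watermarks(system_endpoint_uart1, 10, 0)
end
```

The RX value should match the number of bytes the transmitting device sends per update, otherwise the event will not line up with message boundaries.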

BGScript - Watermark event

This is where the magic happens. I will again make the disclaimer that I don’t know if EVERYTHING I’ve written is necessary, but it works for me.

    dim in(10)
    dim in_len
    dim result

    # System endpoint watermark event listener
    # Generated when there is data available from UART
    event system_endpoint_watermark_rx(endpoint, size)
        if endpoint = system_endpoint_uart1 then
            in_len = size
            call system_endpoint_set_watermarks(system_endpoint_uart1, 0, $ff) # disable RX watermark
            call system_endpoint_rx(system_endpoint_uart1, in_len)(result, in_len, in(0:in_len)) # read data from UART
            call system_endpoint_set_watermarks(system_endpoint_uart1, 10, $ff) # enable RX watermark
            call attributes_write(xgatt_test, 0, in_len, in(0:in_len)) # Write data to GATT
        end if
    end

The big thing to note here is that I check that the endpoint is the one I’m interested in. Personally, I’m not using the other endpoints, so the check is unnecessary for me, but leaving it out could end up burning someone down the road.

Otherwise, the next order of business is to disable watermarks before reading the buffer (I read somewhere that this is necessary to avoid some nasty issues). I re-enable them after I read from the RX buffer.

Previously, I had an if check to make sure the size of the received data was less than 20 bytes, because I couldn’t send more than that to the GATT. I eliminated that code because it never happens in my system and I was trying to reduce BGScript lines (another reason I should drop my other if check).
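If you do want a size guard back, a minimal sketch might look like this. The clamp value of 10 is an assumption chosen to match the dim in(10) buffer; it is not the 20-byte check the original code used, although it also keeps the write under the 20-byte GATT limit. Everything else is the handler shown earlier, unchanged.

```bgscript
dim in(10)      # receive buffer, 10 bytes
dim in_len
dim result

event system_endpoint_watermark_rx(endpoint, size)
    if endpoint = system_endpoint_uart1 then
        in_len = size
        # Guard (assumed value): never read more than the "in" buffer can hold
        if in_len > 10 then
            in_len = 10
        end if
        call system_endpoint_set_watermarks(system_endpoint_uart1, 0, $ff) # disable RX watermark
        call system_endpoint_rx(system_endpoint_uart1, in_len)(result, in_len, in(0:in_len)) # read data from UART
        call system_endpoint_set_watermarks(system_endpoint_uart1, 10, $ff) # enable RX watermark
        call attributes_write(xgatt_test, 0, in_len, in(0:in_len)) # Write data to GATT
    end if
end
```

Bytes beyond the clamp are simply not read in this pass; how they show up in the next watermark event is not covered here and would need testing.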

Results

After figuring out my sleep enable mistake and getting the BGScript watermarks working, things were looking on the up and up. I was receiving events at the rate I expected them, I was no longer getting spurious data… Life was good…

Unfortunately, that only lasted for a few minutes.

Using BLEGUI, I looked at the real values I was getting, and they were all wrong… But they were consistently wrong. In fact, everything looked bit-shifted and array-shifted (my watermark was around 4-5 bytes).

I would send: h e l l o and I would receive: l l o h e (after I bit-shifted the data once; before that, it was gibberish).

When I upped the number of bytes I sent (and my watermark), the pattern changed slightly, but the errors still looked systematic.

Now, I send: 01 02 03 04 aa bb cc dd ee ff and I receive: 02 04 06 08 54 76 98 ba dc fe

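So, definitely wrong, but as I said, wrong in a pattern: each received byte is the sent byte shifted left by one bit, with the top bit dropped (01 becomes 02, aa becomes 54, ff becomes fe).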

Anyways, that’s where I’m up to today. Tomorrow, I’ll take Jeff’s suggestion and try reducing my transmit speed to see whether I’m getting buffer overwrite errors. Apparently the “none” endpoint doesn’t use double buffering, meaning a high enough UART speed could end up overwriting values in the circular UART buffer.

Hopefully I’ll have some good updates in the near future…

UPDATE:

Good news everyone! I throttled the UART communication speed back to 9600 (instead of 115200) in the hardware.xml of the BLE112 (and on my transmitting device), and now my data transmits accurately and reliably. I’m only updating at 1Hz intervals for testing, so I’m not really pushing this system.

That change suggests that there was some buffer-overwriting action going on. Hopefully the BlueGiga guys will get the buffering fixed up when using BGScript so that we can crank up the transmit speed next time around.